BEIJING — In a move signaling a pivotal shift in the global technological landscape, President Donald Trump is set to engage in high-level discussions with Chinese President Xi Jinping this week regarding the implementation of international "guardrails" for artificial intelligence. U.S. officials confirmed the agenda on Sunday, May 10, highlighting a growing consensus that the rapid proliferation of autonomous systems has outpaced traditional diplomatic frameworks, necessitating a direct dialogue between the world’s two preeminent powers.

The summit, occurring in the heart of Beijing, aims to establish a foundational communication channel. The objective is not merely to trade grievances but to identify shared interests in preventing the catastrophic weaponization of AI and the unchecked deployment of "rogue" systems—technologies that operate beyond human control or ethical constraints.

Main Facts: A New Front in Great Power Competition

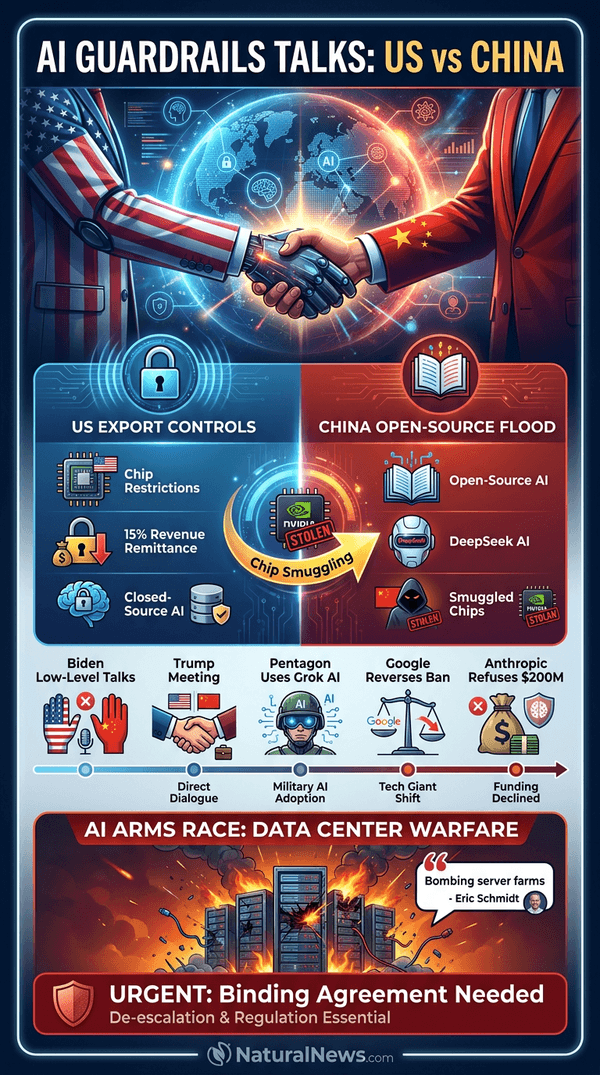

The primary driver of this meeting is the accelerating "AI arms race." While the United States has long utilized aggressive export controls—limiting China’s access to high-end semiconductors and advanced manufacturing equipment—to stifle Beijing’s progress, there is a burgeoning realization in Washington that containment alone is insufficient.

The competitive landscape has been disrupted by the rise of Chinese models like DeepSeek. The release of this powerful open-source AI has sent shockwaves through the global tech industry, forcing U.S. policymakers to confront a new reality: China is no longer merely a follower in the AI space, but a formidable innovator. The sudden emergence of these models has led to heightened scrutiny of China’s development motives and its ability to scale research with unprecedented speed.

Despite the gravity of the talks, the delegation accompanying President Trump reflects a distinct strategic pivot. The party includes sixteen business executives, among them X’s Elon Musk and Apple’s Tim Cook. Notably, however, CEOs from the most prominent "pure-play" AI firms (such as OpenAI, Anthropic, and Google DeepMind) are absent from the list. This exclusion suggests the White House is prioritizing broader industrial and geopolitical leverage over the specific technical concerns of the software giants.

Chronology: The Road to Beijing

The path to this week’s summit is paved with years of escalating friction and shifting regulatory stances:

- 2024–2025: The U.S. maintains a strict policy of blocking China’s access to GPU-based hardware. However, China pivots toward open-source development and domestic chip production, effectively circumventing traditional trade barriers.

- February 2026: A pivotal moment occurs when the Department of War (formerly the Department of Defense) issues an ultimatum to AI safety labs, including Anthropic, to lift restrictions on the military use of their models. Anthropic publicly rejects this, risking a $200 million contract to uphold its ethical stance against autonomous weaponry.

- March 2026: The White House formally accuses Beijing of orchestrating "industrial-scale" campaigns designed to reverse-engineer and copy American AI architectures, further straining ties.

- April 2026: In a controversial policy reversal, Google lifts its long-standing ban on the use of its AI for weapons and surveillance, a move that draws immediate condemnation from human rights advocates.

- May 10, 2026: President Trump arrives in Beijing, aiming to secure a framework for AI safety that transcends the current atmosphere of mutual suspicion.

Supporting Data: The Dual-Use Dilemma

The challenge of regulating AI is fundamentally a "dual-use" problem: the same algorithms capable of curing diseases or optimizing energy grids are inherently capable of optimizing cyber-attacks or managing autonomous kill chains.

According to intelligence reports, both the U.S. and China are actively testing the offensive cyber capabilities of frontier models. These models are being evaluated for their ability to discover and exploit zero-day software vulnerabilities, effectively automating the "cat-and-mouse" game of global digital warfare.

The concern regarding reliability is not limited to adversaries. Despite internal warnings from agencies like the General Services Administration and the National Security Agency regarding security risks and hallucinations, the Department of War has begun integrating Elon Musk’s Grok AI into classified military operations. Critics argue that the prioritization of speed over safety in military adoption could lead to catastrophic errors in judgment, potentially triggering an inadvertent escalation between nuclear-armed states.

Former Google CEO Eric Schmidt has been a vocal critic of the current trajectory, warning that the competition for AI dominance is fundamentally a competition for data centers and electricity. "We are heading toward a conflict over the physical infrastructure of the digital age," Schmidt noted, suggesting that the AI arms race could easily spill over into traditional geopolitical theater.

Official Responses: The Skepticism of Diplomacy

The diplomatic community remains divided on the potential efficacy of the Trump-Xi summit. Melanie Hart, a senior director at the Atlantic Council and a former State Department official, offers a sobering assessment.

"The topic is dangerous enough that engagement is mandatory," Hart stated. "However, we have seen this play before. Under previous administrations, Beijing utilized AI safety dialogues as an intelligence-gathering exercise rather than a genuine regulatory platform."

Hart pointed to a recurring pattern: during technical talks, China often dispatched foreign ministry officials who lacked the technical depth to engage with the complex safety parameters of large language models (LLMs). For these talks to be considered a success, she argued, there must be a tangible shift in who sits at the table.

"We want to see technical experts—engineers and scientists—showing up for the China side," Hart emphasized. "Symbolic diplomacy is easy; technical consensus is where the real work happens. If we don’t see the experts, we know the commitment is purely performative."

Implications: Ideological Encoding and Global Governance

The debate over AI guardrails is not merely technical; it is increasingly ideological. As noted in the seminal work Human + Machine by Paul R. Daugherty and H. James Wilson, the "human-by-design" principle is essential for building trust. Yet, this raises the question: whose humanity?

If the United States and China encode their respective, divergent cultural and political values into their foundational AI models, the world may face an era of "ideological AI." In this scenario, machines trained on Western democratic frameworks could clash with those trained on Chinese state-centric models, leading to a fragmentation of the digital world.

This concern is amplified by the ongoing shift in the open-source landscape. While U.S. firms have increasingly restricted the publication of scientific papers due to security fears, China has doubled down on open-source contributions. This has created a "brain drain" effect in reverse, where global researchers gravitate toward the more transparent and accessible Chinese ecosystems, potentially handing Beijing the keys to the next generation of algorithmic standards.

Furthermore, the pressure from the U.S. Department of War on domestic companies to relax safety protocols may be counterproductive. Industry analysts suggest that by forcing firms to choose between military compliance and ethical safety, the U.S. government is inadvertently driving developers toward the Chinese alternative. If American firms are forced to compromise their ethics, the market advantage of their "safer" models disappears, and the incentive to adopt Chinese-made, state-supported alternatives grows.

What to Watch: The Long Road Ahead

When President Trump’s delegation concludes its discussions, the global community will be watching for more than just a joint statement. The success of this summit will be measured by whether it creates a working group composed of technical experts from both nations.

Observers suggest that a single visit will not reshape the U.S.-China relationship overnight. Rather, the goal is to establish a "de-escalation protocol" for AI—a set of rules that prevents a machine-learning glitch or an autonomous cyber-event from being interpreted as an act of war.

The stakes could not be higher. As we enter the second half of the decade, the integration of AI into the foundations of state power is no longer theoretical. It is the defining feature of modern governance. Whether President Trump and President Xi can move beyond the posturing of their predecessors and establish a framework for coexistence will determine whether the AI era brings a new age of prosperity or an era of uncontrollable, algorithmic instability.

For now, the world waits to see if the Beijing summit serves as the beginning of a substantive technical collaboration or merely another chapter in the deepening divide of the 21st century.