As health systems across the United States scramble to modernize in the face of rising administrative costs and shifting reimbursement models, they are increasingly turning to the high-stakes world of big data analytics. At the forefront of this digital transformation is Palantir Technologies, the Silicon Valley behemoth known for its long history in intelligence and defense contracting. However, as the company expands its footprint into the delicate ecosystem of patient care, it has met a formidable opponent: National Nurses United (NNU).

Representing more than 225,000 registered nurses, the NNU has launched a nationwide campaign to block, or at least heavily scrutinize, the integration of Palantir’s proprietary software into hospital operations. The conflict represents a fundamental clash between two visions for the future of medicine: one prioritizing algorithmic efficiency and the other centering on clinical autonomy, patient privacy, and the human element of care.

The Collision of Data and Care: Main Facts

The core of the dispute lies in the nature of Palantir’s platforms, such as "Foundry," which are designed to aggregate massive, disparate datasets to uncover patterns and optimize operations. While health systems champion these tools for their ability to streamline complex tasks—such as navigating prior authorization requirements, managing supply chains, and reducing the backlog of insurance denials—nurses view them with deep suspicion.

Nurses and labor advocates argue that when a company rooted in intelligence gathering, whose leadership espouses a "technological republic" philosophy, begins to manage hospital workflows, the priorities of the boardroom inevitably bleed into the bedside. The NNU has raised concerns that these systems could be used to dictate staffing levels, influence clinical decision-making through algorithmic "suggestions," and jeopardize the sanctity of sensitive patient data, particularly for vulnerable populations such as immigrants and people with stigmatized health conditions.

A Chronology of Growing Resistance

The friction between healthcare providers and the software giant has escalated rapidly over the last several months, evolving from internal skepticism to high-profile public demonstrations.

- Early 2026: Palantir records significant revenue growth, bolstered by its expanding "Artificial Intelligence Platform" (AIP) and its strategic pivot toward commercial healthcare, prompting increased scrutiny from civil liberties groups and labor organizations.

- April 2026: The conversation turns heated in the United Kingdom, where the NHS faces intense political backlash over its "Federated Data Platform" contract with Palantir. Members of Parliament and privacy advocates demand the contract be scrapped, citing concerns over data governance and the company’s political leanings.

- May 1, 2026 (May Day): The protest movement gains significant traction in the U.S. as the Maine State Nurses Association (MSNA) and community allies hold a major rally in Portland. The demonstration serves as a direct demand for MaineHealth to terminate its contract with Palantir.

- Mid-May 2026: Protests spread to Tennessee, targeting the collaboration between large health systems and Palantir. These demonstrations specifically call out members of Congress who have accepted campaign contributions from the tech company, alleging a conflict of interest in the regulation of healthcare AI.

The MaineHealth Case Study: A Microcosm of the Conflict

The protests in Maine provide a stark example of the disconnect between hospital administration and the nursing staff. When MaineHealth partnered with Palantir, the stated goal was to improve the efficiency of insurance claims, theoretically freeing up clinicians to spend more time with patients rather than navigating bureaucratic red tape.

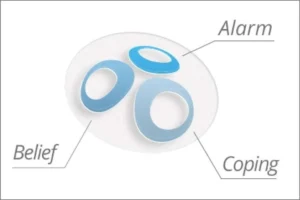

However, Janel Crowley, a registered nurse and board member of the MSNA, has publicly stated that the hospital has not been sufficiently transparent about the scope of the software’s access. The concern is not merely about administrative efficiency; it is about the "black box" nature of the algorithm. Nurses worry that if an AI flags a patient’s insurance claim as likely to be denied, or assigns a patient a "risk score," that information could subconsciously bias a nurse’s or doctor’s approach to care.

Furthermore, the community impact cannot be overstated. In Portland, nurses have expressed visceral fear for their immigrant patients, worrying that if hospital data is ever commodified or accessed by third parties, it could be weaponized to track or marginalize individuals who are already hesitant to seek medical help.

Supporting Data and the Regulatory Landscape

The debate over Palantir is not happening in a vacuum. It is part of a larger, global conversation about the governance of artificial intelligence in healthcare. The American Academy of Nursing has issued a formal position statement calling for mandatory transparency disclosures. The academy argues that without a standardized regulatory framework, comparable to the FDA’s oversight of pharmaceuticals, hospitals are essentially conducting a live experiment on patients and staff.

Critics acknowledge that administrative costs are ballooning, driven largely by the complexity of the U.S. insurance system, but argue that the solution may lie not in more opaque software but in systemic reform. Medact, a UK-based health justice organization, points out that vendor contracts are often cloaked in non-disclosure agreements, preventing the public from understanding exactly what data is being collected and how the software’s logic is being applied to human lives.

Official Responses and Corporate Philosophy

Palantir has consistently defended its role in the healthcare sector by emphasizing its commitment to "operational excellence." Executives argue that in an era of aging populations and shrinking margins, hospitals that fail to adopt advanced analytics will be unable to survive. The company positions its software as a neutral, high-capacity tool that allows administrators to "see" the entire health system in real time, thereby reducing waste and improving outcomes.

MaineHealth, responding to the protests, has echoed these sentiments, asserting that the platform operates under the most stringent privacy safeguards available and that the software is explicitly prohibited from interfering with clinical decision-making.

However, observers point to the "Technological Republic" manifesto posted by Palantir’s leadership on X as evidence of a corporate culture that values centralized power and technocratic solutions over traditional democratic or consensus-based governance. This philosophy, critics argue, is fundamentally at odds with the values of the nursing profession, which relies on human judgment, empathy, and patient advocacy.

The UK Experience: Lessons in Public Trust

The situation in the UK’s National Health Service (NHS) offers a cautionary tale. While the Federated Data Platform was marketed as a miracle cure for waiting lists and operational inefficiency, it became a political lightning rod. The concern was never purely technical; it was about the fundamental question of trust. When a company that made its name building tools for the intelligence community is tasked with managing the most intimate data of a nation, the public reaction is rarely one of blind acceptance. The UK experience suggests that for any digital health initiative to succeed, it must be developed with, rather than imposed upon, the clinicians who do the work and the patients who rely on the system.

Implications for the Future of Digital Health

As we look toward the future, the integration of AI into healthcare appears inevitable. Remote patient monitoring, predictive diagnostics, and AI-assisted care coordination offer legitimate opportunities to improve health outcomes. Yet, the backlash against Palantir serves as a vital reminder that technical capability is not synonymous with social license.

The implications for the industry are profound:

- Transparency as a Prerequisite: Future software deployments in healthcare will likely require full audits and public disclosure of algorithms to gain the trust of nursing staff and patient advocates.

- Clinician-Led Design: Technologies designed to "optimize" care must be co-created with the nurses and doctors who understand the nuance of the patient experience.

- Governance Over Growth: Hospitals facing financial pressure due to Medicaid changes or subsidy rollbacks must ensure that their pursuit of efficiency does not compromise the ethical standards of their institutions.

Conclusion: The Neutral Tool Paradox

The controversy surrounding Palantir inevitably recalls the objects from which the company takes its name: J.R.R. Tolkien’s palantíri, the seeing-stones. In the lore, these were not inherently evil; they were tools of communication created for the greater good, yet their utility depended entirely on the intent and wisdom of those who looked into them.

Today’s data analytics tools face the same paradox. They are capable of monumental good, yet without clear, democratic governance and a profound respect for the human element of medicine, they risk becoming instruments of alienation rather than healing. For the thousands of nurses currently marching in the streets, the message is clear: when it comes to the health of the community, data efficiency can never take precedence over the fundamental rights and autonomy of the patient. The digital revolution in healthcare will either be a collaborative effort built on transparency, or it will be a source of persistent, systemic conflict.