Executive Summary: The Paradox of Precision

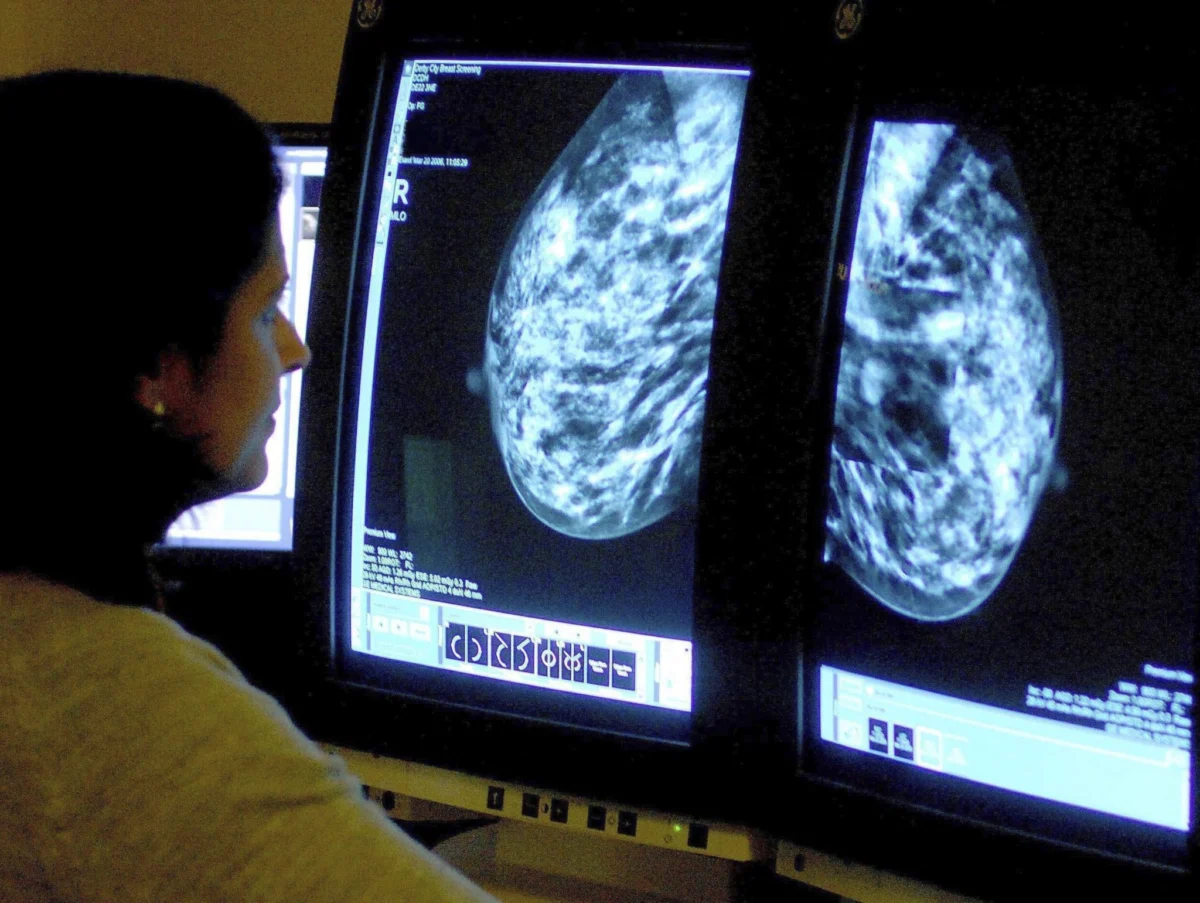

Artificial intelligence (AI) is reshaping oncology, particularly breast cancer screening. Deep learning models that interpret mammograms and stratify patient risk promise higher diagnostic accuracy and more efficient workflows. Yet as these systems become embedded in clinical practice, a growing body of research, including analyses from 2025 and 2026, warns of a latent danger: "automation complacency."

While AI can reduce false positives and streamline the evaluation of complex imaging, its presence alters the fundamental dynamics of clinical decision-making. As the healthcare industry faces a looming physician shortage and chronic burnout, the temptation to rely on AI as an automated "safety net" is strong. However, experts caution that without rigorous oversight and the preservation of human diagnostic intuition, the very tools designed to save lives could inadvertently undermine the quality of patient care.

The Chronology of AI Integration in Oncology

The trajectory of AI in medical imaging has shifted rapidly from theoretical research to clinical application.

- 2020–2024 (The Proof of Concept): Early studies established that deep learning algorithms could match the diagnostic prowess of radiologists in controlled laboratory settings. These years focused on refining convolutional neural networks to identify subtle calcifications and masses in mammograms.

- 2025 (The Ethical Reckoning): As AI systems began moving into pilot clinical environments, the focus shifted from "can AI do it?" to "how should humans work with it?" The publication of landmark studies in AI and Ethics highlighted the psychological phenomenon of automation complacency, marking the start of a critical discourse on clinical trust.

- 2026 (The Operational Reality): Large-scale implementation studies, particularly those involving Google-developed systems, demonstrated that AI could serve as an effective "second reader." This year marked the transition to assessing how AI impacts real-world clinician workflows, revealing the complexity of human-AI collaboration in high-pressure settings.

Supporting Data: The Promise and The Peril

Gains in Detection and Efficiency

The quantitative benefits of AI in breast cancer screening are compelling. Recent research indicates that AI-assisted mammography significantly reduces variability between clinicians. By acting as a triage tool, AI can prioritize high-risk cases, allowing radiologists to focus their cognitive energy where it is needed most.

- Diagnostic Accuracy: Studies published in JAMA have shown that deep learning models frequently outperform traditional clinical risk models. They are particularly adept at identifying patterns that the human eye might miss, leading to a reduction in both false negatives (missed cancers) and false positives (which can lead to unnecessary biopsies).

- Workload Optimization: For departments struggling with high volumes, AI acts as a force multiplier. By filtering out clear "normal" screenings, the AI system reduces the administrative and diagnostic workload, providing clinicians with more time to focus on complex, ambiguous cases.
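
The triage idea described above can be illustrated with a minimal sketch. Everything named here is hypothetical: the `Screening` record, the `risk_score` field, and the `routine_below` cutoff of 0.05 are illustrative assumptions, not values taken from any cited study or deployed system. The sketch only reorders the worklist; it does not remove any exam from human review.

```python
from dataclasses import dataclass


@dataclass
class Screening:
    exam_id: str
    risk_score: float  # hypothetical model output in [0, 1]


def triage(exams, routine_below=0.05):
    """Split exams into a priority queue (highest risk first) and a routine queue.

    The cutoff is an illustrative assumption, not a clinically validated threshold.
    """
    priority = sorted(
        (e for e in exams if e.risk_score >= routine_below),
        key=lambda e: e.risk_score,
        reverse=True,
    )
    routine = [e for e in exams if e.risk_score < routine_below]
    return priority, routine


exams = [Screening("A", 0.02), Screening("B", 0.91), Screening("C", 0.40)]
priority, routine = triage(exams)
print([e.exam_id for e in priority])  # ['B', 'C']
print([e.exam_id for e in routine])   # ['A']
```

Keeping the routine queue as a separate list, rather than discarding it, reflects the article's point that the AI prioritizes work while the clinician still reads it.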

The Phenomenon of Eroded Vigilance

Despite these gains, the "trust" factor remains a significant point of contention. A 2025 study examining diagnostic accuracy found that clinicians who are shown an AI recommendation are susceptible to "automation bias." When the AI suggests a diagnosis, clinicians may set aside their own correct, independent judgment to align with the machine, or come to rely on it so heavily that their own diagnostic vigilance wanes.

A systematic review scheduled for publication in June 2026 reinforces these findings. It notes that when a human and an AI disagree, the "arbitration" process of deciding who is right adds a layer of cognitive burden. If the AI is "usually right," the clinician may eventually stop challenging the system, creating a dangerous feedback loop in which errors are overlooked by both parties.

Workforce Shortages: The Silent Driver of Adoption

The push for AI adoption is not merely a search for technical excellence; it is a response to a looming crisis in the healthcare workforce.

The Burnout Epidemic

In 2025, 41.9% of physicians reported symptoms of burnout. Burnout is a critical variable in patient safety, directly linked to an increase in medical errors and a decrease in patient satisfaction. As radiologists and oncologists face increasing pressure to process more images in less time, AI presents itself as a necessary relief valve.

The 2036 Projections

The urgency is compounded by long-term workforce projections. The healthcare industry is expected to face a shortage of up to 86,000 physicians by 2036. This shortage is driven by a "perfect storm":

- An Aging Population: A higher incidence of age-related cancers necessitates more screening.

- Increased Demand: Growing access to healthcare services increases the volume of diagnostic imaging required.

- Shrinking Workforce: A combination of retirements and a slow pipeline of new medical professionals is creating a capacity gap that only technology seems capable of bridging.

However, researchers warn that using AI as a "quick fix" for staffing shortages is a hazardous strategy. If AI is implemented as a replacement for human oversight rather than a supportive tool, the risk of automation complacency rises, potentially leading to systemic errors that could affect thousands of patients.

Official Responses and Expert Consensus

Leading medical institutions and researchers are now calling for a "Human-in-the-Loop" standard. The consensus among clinical experts is that while decision-support systems are vital for the future of oncology, they must be integrated with caution.

- Preserving Clinical Judgment: The JAMA analysis emphasizes that AI should be viewed as a consultant, not a final authority. Clinicians are encouraged to practice "active clinical engagement," where they perform an independent evaluation before reviewing the AI’s output.

- Mitigating Cognitive Overload: Experts argue that poorly designed interfaces can lead to "alert fatigue," where clinicians ignore warnings because they are overwhelmed by data. Effective integration requires that AI insights be presented in a way that respects the clinician’s existing workflow and decision-making rhythm.

- Accountability Frameworks: There is a growing demand for clear legal and ethical guidelines regarding "who is responsible" when an AI-assisted diagnosis leads to a poor outcome. Current discussions suggest that the final responsibility must remain with the clinician, necessitating that they remain fully informed and engaged with the AI’s logic.

Implications: The Path Forward

The integration of AI into breast cancer care is an irreversible trend, but the manner in which it is integrated will determine its success or failure.

1. Training and Education

Medical schools and residency programs must begin incorporating "AI Literacy" into their curricula. Future radiologists must be trained not only in pathology but also in understanding the limitations, biases, and psychological pitfalls of working with automated systems.

2. Design for Human-AI Synergy

Developers must prioritize "explainable AI" (XAI). Instead of providing a binary "cancer/no-cancer" output, systems should highlight the specific regions of interest and the confidence level of the prediction. By showing their "work," these systems can help clinicians maintain a healthy level of skepticism and engagement.
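
As a rough illustration of what a non-binary output might look like, the sketch below models a finding that carries its regions of interest and a confidence value for each. The class names, fields, and pixel-box representation are assumptions made for this example; they do not describe the output format of any real screening product.

```python
from dataclasses import dataclass, field


@dataclass
class RegionOfInterest:
    x: int              # top-left corner, in pixels (assumed coordinate system)
    y: int
    width: int
    height: int
    confidence: float   # hypothetical per-region confidence in [0, 1]


@dataclass
class ExplainedFinding:
    exam_id: str
    suspicion: float    # hypothetical overall score in [0, 1]
    regions: list = field(default_factory=list)

    def summary(self):
        """Return a readable report, listing the most confident regions first."""
        lines = [f"Exam {self.exam_id}: suspicion {self.suspicion:.0%}"]
        for r in sorted(self.regions, key=lambda r: r.confidence, reverse=True):
            lines.append(
                f"  region at ({r.x}, {r.y}), {r.width}x{r.height} px, "
                f"confidence {r.confidence:.0%}"
            )
        return "\n".join(lines)


finding = ExplainedFinding(
    "X1",
    0.72,
    [RegionOfInterest(120, 340, 40, 32, 0.35), RegionOfInterest(410, 220, 25, 25, 0.80)],
)
print(finding.summary())
```

Presenting the score alongside the regions that drove it gives the reader something concrete to check against the image, instead of a verdict to accept or reject.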

3. Continuous Monitoring

Hospitals should implement ongoing audits of human-AI interactions. By monitoring how often clinicians agree with the AI and, more importantly, how often they disagree, institutions can identify if "automation complacency" is taking root in their specific department.
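
One way such an audit could be computed is sketched below. It assumes each read is logged as a triple of the clinician's call, the AI's call, and a later confirmed outcome (for example, from biopsy or follow-up). That logging format, the function name, and the metric names are hypothetical. The second metric, how often clinicians went along with the AI when the AI was wrong, is offered as one possible warning sign of complacency, not as an established standard.

```python
def audit_reads(reads):
    """Summarize human-AI agreement from (clinician_call, ai_call, confirmed) triples.

    Each element is a boolean: True means "suspicious for cancer".
    Returns None for a rate whose denominator is zero.
    """
    total = agree = ai_wrong = followed_wrong_ai = 0
    for clinician_call, ai_call, confirmed in reads:
        total += 1
        if clinician_call == ai_call:
            agree += 1
        if ai_call != confirmed:
            ai_wrong += 1
            if clinician_call == ai_call:
                followed_wrong_ai += 1
    return {
        "agreement_rate": agree / total if total else None,
        "followed_wrong_ai_rate": followed_wrong_ai / ai_wrong if ai_wrong else None,
    }


reads = [
    (True, True, True),     # both correct
    (False, False, False),  # both correct
    (True, True, False),    # AI wrong, clinician followed it
    (False, True, False),   # AI wrong, clinician correctly overrode it
    (False, False, True),   # AI wrong, clinician followed it
    (True, False, True),    # AI wrong, clinician correctly overrode it
]
result = audit_reads(reads)
print(result)
```

A rising agreement rate alone is ambiguous, since it may simply mean the AI is improving. Tracking agreement on the cases where the AI turned out to be wrong separates earned trust from eroded vigilance.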

4. A Balanced Perspective

Ultimately, the goal is "augmented intelligence": the AI handles high-volume, routine screening tasks, freeing the clinician to apply their expertise to the most complex and nuanced cases. If we can balance the efficiency of machines with the judgment of humans, we may ease workforce shortages while improving diagnostic accuracy. If we fail, we risk trading the wisdom of the expert for the efficiency of the algorithm, a bargain that patients cannot afford.

The road ahead is not about choosing between human and machine; it is about cultivating a collaborative partnership. By prioritizing transparency, training, and continuous clinical oversight, the medical community can ensure that AI serves as a powerful instrument of healing rather than a catalyst for complacency.