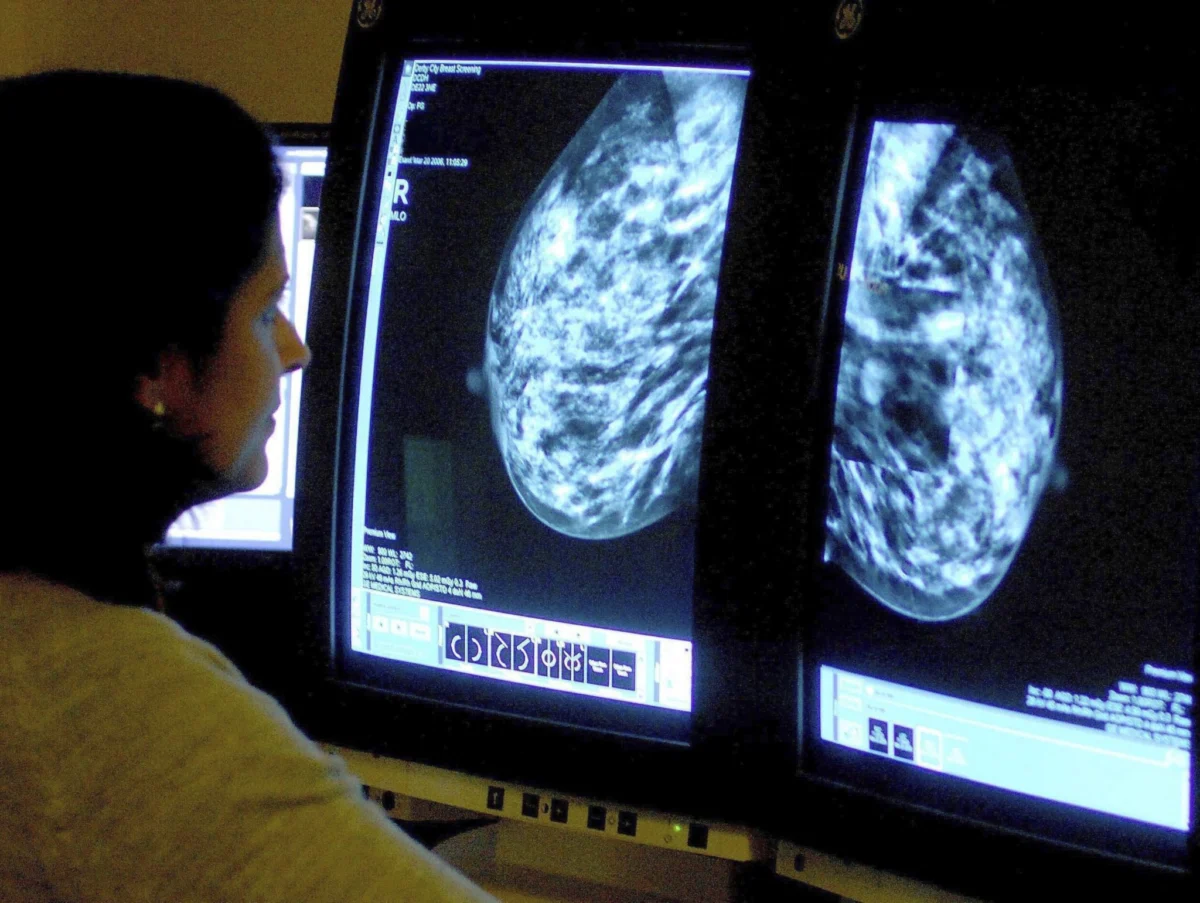

As artificial intelligence (AI) transitions from an experimental novelty to a cornerstone of modern oncology, the healthcare sector finds itself at a critical crossroads. While the integration of deep learning models in breast cancer screening has yielded unprecedented gains in diagnostic accuracy and operational efficiency, a growing body of research warns that this technological leap carries a hidden cost: "automation complacency."

As clinicians increasingly rely on algorithmic guidance to manage overwhelming patient volumes, the medical community is grappling with a profound question: How can we harness the immense power of AI without eroding the essential, independent judgment that defines high-quality clinical care?

Key Takeaways

- Performance Gains: Recent studies confirm that AI-driven mammography systems can match or exceed human performance in detecting breast cancer while reducing false positives.

- The Complacency Trap: Researchers warn that "automation complacency" occurs when clinicians defer too readily to AI, potentially weakening their own diagnostic vigilance.

- Workforce Pressure: Rising physician burnout and projected shortages are accelerating AI adoption, creating a tension between efficiency needs and the risk of human error.

- The "Second Reader" Model: AI is proving most effective as a "second reader," though this integration requires new frameworks for accountability and oversight.

The Evolution of AI in Oncology: A Chronology

The integration of AI into breast cancer screening has accelerated rapidly over the last several years, evolving from basic image analysis to sophisticated risk-stratification engines.

- 2020–2024: The Early Promise: Early studies demonstrated that deep learning models could identify subtle patterns in mammography that were often invisible to the human eye. These tools initially served as experimental triage systems.

- 2025: The Ethical Turning Point: A landmark analysis published in AI and Ethics formally identified the phenomenon of "automation complacency." For the first time, the literature shifted from focusing solely on algorithmic accuracy to investigating the human-AI interface.

- Early 2026: Validation of Efficacy: Major publications, including JAMA and Nature, released findings indicating that Google-developed mammography systems could outperform radiologists in high-volume environments, effectively acting as a "second reader" to catch cancers that might otherwise be missed.

- Mid-2026: The Shift toward Workflow Integration: As of June 2026, the discourse has pivoted to systematic reviews of clinical workflows. Emerging research suggests that the presence of AI has fundamentally altered the decision-making dynamic in radiology departments, necessitating new clinical protocols.

Supporting Data: Efficiency vs. Vigilance

The data support the clinical utility of AI, but they also highlight the dangers of overreliance.

Gains in Detection and Efficiency

Research published in JAMA underscores the transformative potential of deep learning models. These models have proven capable of predicting breast cancer risk with higher accuracy than traditional clinical risk models. In high-volume screening environments, these tools have served as an invaluable "second pair of eyes," reducing the burden on radiologists and mitigating the fatigue-related variability that often plagues human interpretation.

The Cost of Trust

Conversely, a 2025 study measuring diagnostic accuracy against clinician self-reported trust revealed a troubling trend: when an AI system is perceived as highly accurate, clinicians are more likely to override their own correct initial judgments to align with the AI’s recommendation. This "trust bias" suggests that as AI performance improves, the risk of human error through passive acceptance increases. A June 2026 systematic review adds that when human and machine disagree, resolving the discrepancy takes extra time, which can negate some of the efficiency gains promised by the technology.
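The efficiency trade-off described above can be made concrete with a little arithmetic. The following is a minimal sketch, not a model drawn from any of the cited studies: it assumes every case receives one AI-assisted human read and that each human-AI disagreement adds a fixed arbitration cost. The function names and all numbers are hypothetical and serve only as illustration.

```python
def expected_minutes_per_case(read_min, disagreement_rate, arbitration_min):
    """Average clinician time per case when AI acts as a second reader.

    Assumption: every case gets one human read, and each case where the
    human and the AI disagree incurs one additional arbitration step.
    """
    if not 0.0 <= disagreement_rate <= 1.0:
        raise ValueError("disagreement_rate must be between 0 and 1")
    return read_min + disagreement_rate * arbitration_min


def break_even_disagreement_rate(baseline_min, read_min, arbitration_min):
    """Disagreement rate at which the AI workflow costs as much as the baseline."""
    if arbitration_min <= 0:
        raise ValueError("arbitration_min must be positive")
    return (baseline_min - read_min) / arbitration_min


# Hypothetical figures for illustration only, not taken from any study.
baseline = 4.0       # minutes per case without AI (assumed)
with_ai_read = 3.0   # minutes per case with AI assistance (assumed)
arbitration = 8.0    # extra minutes per human-AI disagreement (assumed)

print(expected_minutes_per_case(with_ai_read, 0.05, arbitration))
print(break_even_disagreement_rate(baseline, with_ai_read, arbitration))
```

Under these assumed figures, the time saved per read disappears once disagreements occur in more than one case in eight. Real departments would need their own measured values for each parameter.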

Workforce Dynamics: The Pressure to Automate

The rapid adoption of AI is not happening in a vacuum. It is being driven by systemic pressures within the healthcare industry, chiefly physician burnout and a projected workforce shortage.

The Burnout Crisis

According to 2025 data from the American Medical Association (AMA), 41.9% of physicians report symptoms of burnout. This crisis is directly linked to increased medical errors and a decline in patient satisfaction. With hospitals facing a projected shortage of up to 86,000 physicians by 2036, administrators are viewing AI as a critical infrastructure upgrade necessary to maintain basic levels of service.

Cognitive Overload

While AI is intended to alleviate workload, researchers caution that poor integration creates a secondary problem: cognitive overload. When clinicians are presented with complex, AI-generated risk scores alongside traditional imaging, they must expend significant mental energy interpreting the machine’s output. If the system is not designed to support intuitive decision-making, it may inadvertently place clinicians in a position where they are forced to choose between efficiency and the deeper, nuanced analysis required for high-stakes oncology cases.

Official Responses and Expert Consensus

The medical community remains cautiously optimistic, but calls for guardrails are growing louder. Leading medical institutions have begun advocating for "Human-in-the-Loop" (HITL) frameworks.

"The goal of AI in oncology should be to augment human intelligence, not replace it," states one leading researcher in the AI and Ethics report. "We are seeing that the most successful departments are those that treat AI as a junior partner—a tool that provides information but is subject to the rigorous, final oversight of a board-certified physician."

Furthermore, clinical experts are increasingly suggesting that medical training must evolve. The next generation of radiologists and oncologists will require training in "algorithmic literacy"—the ability to understand the limitations, biases, and probabilistic nature of the AI tools they are using.

Implications for the Future of Healthcare

The implications of this technological shift are profound, touching upon the core tenets of medical ethics, professional accountability, and the nature of the doctor-patient relationship.

1. The Accountability Gap

When an AI system misses a diagnosis or provides a false positive, who is responsible? Current legal and ethical frameworks remain anchored in human oversight. As AI takes on a larger role in patient-facing workflows, hospitals must clearly define the chain of command. If a clinician follows an AI’s incorrect suggestion, the liability remains with the human, yet the systems are often "black boxes" that are difficult for the human to audit in real time.

2. Preserving Clinical Intuition

There is a fear that if we rely too heavily on algorithmic outputs, we may lose the "clinical intuition" that comes from years of experience—the ability to identify rare pathologies that the AI has not been trained to recognize. Maintaining "active clinical engagement" is not just a safety measure; it is a vital part of medical skill retention.

3. The Path Forward

To balance efficiency with safety, the integration of AI must move beyond mere adoption toward thoughtful orchestration. This involves:

- Dynamic Calibration: AI systems that adjust their feedback based on the clinician’s level of experience.

- Transparency Protocols: Ensuring that AI tools provide "explainable" outputs, allowing clinicians to understand why a certain risk score was assigned.

- Regular Audits: Continuous monitoring of human-AI collaboration to identify if "automation complacency" is taking root within specific departments.
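One way a regular audit of this kind might be approached is sketched below. It is an illustrative assumption, not a protocol from the cited research: it supposes a department logs each clinician's read before and after seeing the AI output, plus the confirmed outcome. The `Reading` record, the function names, and the 0.2 threshold are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    department: str
    initial_call: bool  # clinician's read before seeing the AI output
    ai_call: bool       # AI recommendation
    final_call: bool    # clinician's read after seeing the AI output
    truth: bool         # confirmed outcome, e.g. biopsy or follow-up


def harmful_deference_rates(readings):
    """Per department: share of correct initial reads abandoned to match a wrong AI call."""
    at_risk = defaultdict(int)
    switched = defaultdict(int)
    for r in readings:
        # At-risk case: the clinician was right and the AI disagreed,
        # which for a yes/no call means the AI was wrong.
        if r.initial_call == r.truth and r.ai_call != r.initial_call:
            at_risk[r.department] += 1
            if r.final_call == r.ai_call:
                switched[r.department] += 1
    return {dept: switched[dept] / n for dept, n in at_risk.items()}


def flag_departments(readings, threshold=0.2):
    """Departments whose harmful-deference rate exceeds the (assumed) threshold."""
    rates = harmful_deference_rates(readings)
    return sorted(dept for dept, rate in rates.items() if rate > threshold)


# Toy log, invented for illustration.
log = [
    Reading("A", True, False, False, True),   # correct read abandoned for wrong AI call
    Reading("A", True, False, True, True),    # clinician held a correct read
    Reading("B", False, True, False, False),  # clinician held a correct read
    Reading("B", True, True, True, True),     # agreement, not an at-risk case
]
print(harmful_deference_rates(log))
print(flag_departments(log))
```

The metric deliberately counts only cases where the clinician started out right, so it isolates passive acceptance from cases where the AI legitimately corrected an error. Departments with no at-risk cases do not appear in the result.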

Conclusion

The integration of AI into breast cancer screening represents one of the most significant advancements in modern medicine, offering the promise of earlier detection and more personalized care. However, the technology is only as effective as the human oversight governing it. As we move forward, the priority must remain the preservation of the human element in medicine. AI can process the data, but it is the human clinician who must synthesize that data into a diagnosis, ensuring that the patient is treated as an individual, not just a set of pixels on a screen.

By fostering a culture of healthy skepticism and rigorous engagement, the medical field can ensure that AI becomes a partner in healing, rather than a surrogate for the expertise that patients trust with their lives.