For decades, the standard of care for patients living with heart failure (HF) has been deceptively simple: step on a scale every morning. If the number creeps up, the gain is traditionally interpreted as a sign of fluid retention, a precursor to a potentially life-threatening exacerbation that could land a patient in the hospital. However, this method has well-known limitations, including high false-alarm rates and poor sensitivity to early-stage decompensation.

New data presented at the European Society of Cardiology’s Heart Failure 2026 meeting in Barcelona suggest a technological leap forward. Findings from the observational TIM-HF3 trial indicate that artificial intelligence (AI)-based software, which monitors subtle acoustic changes in a patient’s voice, may significantly outperform weight monitoring in predicting impending heart failure hospitalizations.

The Science of Sound: Vocal Biomechanics and Fluid Overload

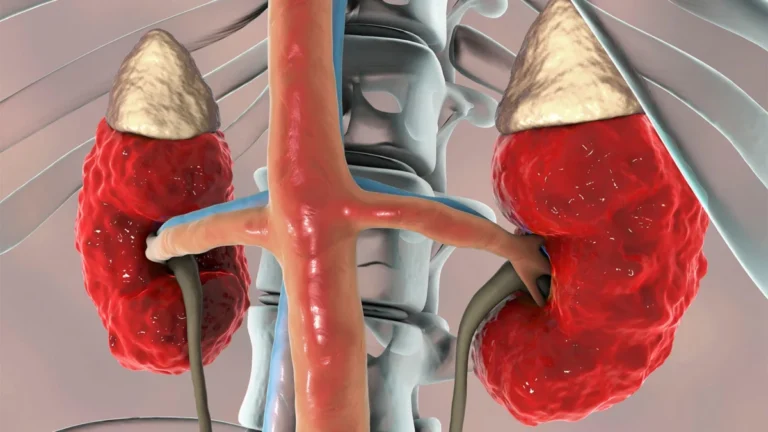

The premise behind the research is rooted in the physiological changes that occur when the heart struggles to pump effectively. As fluid accumulates in the lungs and systemic circulation—a hallmark of worsening heart failure—it alters the biomechanics of the vocal folds and the acoustics of the upper airway.

"Pulmonary and systemic fluid overload alter vocal fold biomechanics and upper airway acoustics in heart failure patients," explained Leonhard Riehle, MD, of Noah Labs in Berlin, during a late-breaking session at the conference. Riehle noted that prior clinical evidence has already established that cross-sectional acoustic signatures can successfully distinguish between stable and decompensated heart failure patients.

The TIM-HF3 trial focused on a specific, repeatable task: the sustained pronunciation of the /i/ vowel, as heard in words like "bee" or "green." This sound was selected for its consistency and its proven sensitivity to subtle shifts in airway congestion across both English and German speakers. The study sought to answer a pivotal question: Could longitudinal monitoring of this single sound prospectively predict hospitalization in a real-world, outpatient cohort?

Chronology: From Standard Care to Digital Innovation

The TIM-HF3 study enrolled 105 patients from three German clinical centers. All participants presented with NYHA class II/III symptoms and had a documented history of HF-related hospitalization within the previous 12 months. The study design utilized both an interventional and an observational framework to compare existing protocols against emerging technology.

The Standard-of-Care Arm

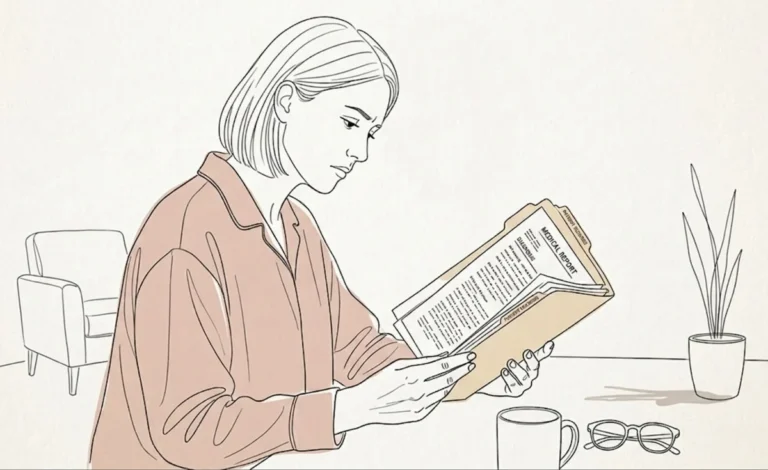

Participants in the standard-of-care group followed a rigorous, traditional regimen. They monitored their weight, blood pressure, and ECG results daily. These metrics were transmitted to clinicians, who reviewed the data in real time to identify potential red flags for fluid retention.

The Observational Voice-Monitoring Arm

In the observational arm, participants were asked to use a tablet to record five-second audio segments of themselves pronouncing the /i/ vowel once per week. Crucially, these recordings were not initially available to their clinical team. They were securely uploaded to a central server and analyzed retrospectively only after the trial concluded.

The AI algorithm generated a risk score from vocal features, adjusted for the patient’s age and sex. A voice alert for impending hospitalization was triggered when that score deviated from the patient’s personal baseline, with the deviation judged against that patient’s own variability (their individual standard deviation). For weight monitoring, alerts were triggered by a weight gain of at least 2 kg within three days or 5 kg within one week.
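
The two alert rules described above can be sketched roughly as follows. This is a minimal illustration, not the trial's software: the function names `weight_alert` and `voice_alert`, the date-keyed weight log, and the threshold `k` (how many baseline standard deviations count as an alert) are all assumptions, since the source gives the weight thresholds but not the voice threshold or any implementation detail.

```python
from datetime import date, timedelta
from statistics import mean, stdev


def weight_alert(weights: dict[date, float], today: date) -> bool:
    """True if weight rose by >= 2 kg within 3 days or >= 5 kg within 7 days."""
    current = weights[today]
    for window_days, threshold_kg in ((3, 2.0), (7, 5.0)):
        for back in range(1, window_days + 1):
            earlier = weights.get(today - timedelta(days=back))
            # Days without a reading are skipped rather than imputed.
            if earlier is not None and current - earlier >= threshold_kg:
                return True
    return False


def voice_alert(baseline_scores: list[float], new_score: float, k: float) -> bool:
    """True if a new risk score lies more than k baseline standard deviations
    above the patient's own baseline mean (k is a hypothetical parameter)."""
    mu = mean(baseline_scores)
    sigma = stdev(baseline_scores)
    return sigma > 0 and (new_score - mu) / sigma > k


# Hypothetical readings: a 2.1 kg gain over three days trips the weight rule.
log = {date(2026, 1, 1): 80.0, date(2026, 1, 2): 80.6, date(2026, 1, 4): 82.1}
print(weight_alert(log, date(2026, 1, 4)))
```

Comparing each patient with their own baseline, rather than with a population cutoff, is what lets the voice rule tolerate large differences in normal voice between individuals.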

Supporting Data: Sensitivity and Accuracy

The results of the analysis were striking. Among the 92 outpatients included in the final data set, researchers observed 44 heart failure hospitalizations over a mean follow-up period of 10 months. Among the 25 hospitalizations with adequate voice-recording data, the disparity between the two methods was profound.

The voice-based algorithm achieved a sensitivity of 84.0% (95% CI 65.3–93.6%) in detecting these events. In contrast, the traditional weight-monitoring approach demonstrated a sensitivity of only 36.0% (95% CI 20.2–55.5%).
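
The source does not say how these confidence intervals were computed. As a rough check, assuming the sensitivities correspond to 21 of 25 and 9 of 25 detected hospitalizations (counts inferred from the percentages, not stated in the source), a Wilson score interval gives bounds that agree with the reported ones to one decimal place. The helper name `wilson_interval` is illustrative.

```python
from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 for ~95%)."""
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Detected counts are inferred: 84.0% and 36.0% of 25 events.
for label, detected in (("voice", 21), ("weight", 9)):
    lo, hi = wilson_interval(detected, 25)
    print(f"{label}: {detected / 25:.1%} (95% CI {lo:.1%} to {hi:.1%})")
```

The width of both intervals reflects how few events (25) the comparison rests on.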

Beyond simple sensitivity, the timing and frequency of alerts also provided a compelling case for the voice-based approach:

- Early Warning: The median time between an alert and the subsequent hospitalization was 29 days for voice monitoring, compared with just 13 days for weight monitoring. This head start of roughly two weeks could prove critical in allowing physicians to adjust medications, such as diuretics, before an emergency room visit becomes necessary.

- Fewer False Alarms: The yearly rate of unexplained or "false" alerts per patient was significantly lower for the voice tool (2.62) than for weight monitoring (6.07).

- Comprehensive Detection: Data showed that 56% of the hospitalizations were detected by voice alone, while 92% were caught by the combined use of voice and weight, highlighting that voice analysis serves as a powerful, standalone biomarker.
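
The voice-only and combined figures in the list above can be cross-checked against the two sensitivities with simple inclusion-exclusion. The sketch below assumes all percentages refer to the same 25 hospitalizations and reads the voice-only figure as a percentage of those events. The counts are inferred from the reported percentages, not taken from the source.

```python
total = 25                      # hospitalizations with adequate voice data

voice = round(0.84 * total)     # detected by voice (alone or with weight)
weight = round(0.36 * total)    # detected by weight (alone or with voice)
either = round(0.92 * total)    # detected by at least one method

both = voice + weight - either  # inclusion-exclusion: caught by both methods
voice_only = voice - both
weight_only = weight - both
missed = total - either

print(f"both: {both}, voice only: {voice_only}, "
      f"weight only: {weight_only}, missed: {missed}")
print(f"voice-only share: {voice_only / total:.0%}")
```

Under these assumptions, 14 of the 25 events (56%) were flagged by voice alone, which is consistent with the figures reported above.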

Official Responses and Expert Commentary

The cardiology community has reacted to the TIM-HF3 findings with a mixture of excitement and scientific caution. William T. Abraham, MD, a prominent researcher from The Ohio State University, has long championed voice monitoring as a transformative tool for heart failure management. His team is currently developing HearO, a similar voice-analysis technology, and they are preparing to present the pivotal DETECT-HF trial results later this year.

"The good news is the totality of the data really supports the notion that there is something here: that vocal changes represent an important biomarker in the evaluation of heart failure patients," Dr. Abraham noted. However, he cautioned that the small sample size of the TIM-HF3 study and the weekly frequency of recordings might lead to "overfitting." He argued that while the findings are "highly supportive of the field," there is still work to be done to validate these results on a larger, more diverse scale.

Piotr Ponikowski, MD, PhD, of Wroclaw Medical University, co-moderated the session and was more direct in his assessment. "The lesson I learned is get rid of this weight [as a predictor], because it’s boring and insensitive," he stated. "Why don’t we only recommend voice tracing?"

While Dr. Ponikowski’s enthusiasm highlights the frustration clinicians feel regarding the inaccuracy of current monitoring, he also raised valid questions regarding the global scalability of the tool. Specifically, he questioned how an algorithm trained on German speakers might perform across different languages and cultural vocal patterns.

Implications: A New Paradigm for Patient Ownership

The transition from scale-based monitoring to voice-based analytics represents more than just a technological upgrade; it signals a potential paradigm shift in how patients interact with their chronic conditions.

Usability and Patient Empowerment

One of the most significant barriers to consistent monitoring in heart failure is "device fatigue." Many patients struggle to maintain a daily routine of weighing themselves, and more advanced tools, such as invasive hemodynamic monitors like CardioMEMS, require an invasive implantation procedure.

As Jozine ter Maaten, MD, PhD, of the University Medical Centre Groningen, pointed out, the voice-analysis tool is highly intuitive. "It seems like a very easily applicable tool for patients," she said, suggesting that the simplicity of speaking into a smartphone may encourage better adherence. Researchers are now investigating whether this ease of use helps patients feel more in control of their own health, thereby fostering better "ownership" of their symptoms.

Future Hurdles

Despite the promise, Dr. Riehle and his colleagues acknowledge that the industry is not yet ready to abandon traditional methods. Outcomes data from larger, prospective interventional trials are required before a clinical "paradigm shift" can be fully endorsed. Furthermore, the regulatory landscape remains a challenge; as of mid-2026, no voice-monitoring applications for heart failure are approved for commercial use in the US or European markets.

As the field moves forward, the next phase of research will focus on expanding the linguistic and geographic reach of the AI algorithms. The upcoming European pivotal trial plans to incorporate Spanish and Dutch, alongside English and German, to ensure the tool’s diagnostic accuracy remains robust across diverse populations.

Conclusion

The TIM-HF3 trial serves as a clarion call for innovation in the management of chronic heart failure. While the humble scale has served its purpose for decades, the advent of AI-driven acoustic analysis offers a more sensitive, less intrusive, and potentially life-saving alternative. By turning a simple vowel sound into a sophisticated data point, the medical community may finally be on the cusp of predicting heart failure crises before they happen, moving from a reactive model of care to a truly proactive, digital-first future.