Main Facts: The New Corporate Imperative

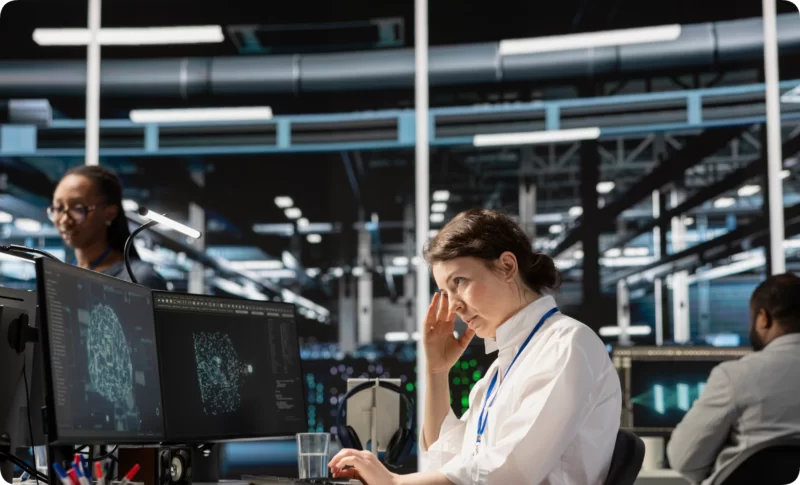

The integration of Artificial Intelligence (AI) into the workplace has moved past speculative novelty and become an institutional mandate. According to recent reporting in The Wall Street Journal (Bindley & Blunt, 2024), the workforce is undergoing a fundamental transformation in which AI fluency is no longer a "bonus skill" but a core requirement for employment. Organizations increasingly assess AI proficiency during hiring, and annual performance reviews now weigh how well employees use automated tools to cut costs and drive productivity.

This shift has created a two-track reality in professional life. On one hand, companies are offering bonuses and incentives to "AI champions" who help their departments work smarter. On the other, a profound psychological phenomenon, "AI Anxiety," is taking root: current data suggest that one in three workers report significant stress about the possibility of being replaced by automated systems. As AI agents grow more sophisticated, handling everything from medical scheduling to complex data analysis with startlingly "human" efficiency, the question for the modern professional has shifted from whether AI will change their life to how they will survive the transition.

Chronology: From Novelty to Necessity

AI’s integration into daily life has moved faster than previous technological revolutions, such as the advent of the personal computer or the internet.

- The Early Integration (Pre-2022): AI existed largely in the background, powering search algorithms, recommendation engines, and basic customer service bots. For most, it was a convenience rather than a competitor.

- The Generative Breakout (2022-2023): The public release of chatbots built on Large Language Models (LLMs), such as ChatGPT, and of image generators such as Midjourney and DALL-E 3 marked a turning point. For the first time, the "creative" and "intellectual" moats of human labor seemed breachable.

- The Professional Pivot (2024-Present): As reported by Bindley and Blunt (2024), employers, and tech firms in particular, shifted from "encouraging" AI use to "enforcing" it. This era is defined by the "AI Fluency" requirement, with 85% of companies now factoring AI usage into their internal metrics for success.

- The Psychological Response: As the technology matured, the initial "wow factor" was replaced by a widespread sense of precariousness. By 2025, clinical psychologists began seeing a surge in patients presenting with specific anxieties related to technological obsolescence.

Supporting Data: The Metrics of Change

The scale of the AI transition is reflected in several key statistics that highlight both the opportunity and the threat perceived by the global workforce:

| Metric | Value | Context |
|---|---|---|
| Workforce Anxiety | 33% (1 in 3) | Workers who fear total replacement by AI systems within the next five years. |
| Performance Integration | 85% | Share of firms that now include AI tool proficiency in formal performance evaluations. |
| Role Evolution | High growth | While some roles are being phased out, a significant number of "AI-augmented" roles are being created for those who adapt. |
| Efficiency Gains | 20-40% | Average reported productivity increase for knowledge workers using AI for drafting, research, and data analysis. |

These figures suggest that an "AI Divide" may be emerging as a significant driver of economic inequality. Those who lean into the technology are seeing their value compounded, while those who retreat out of anxiety face a heightened risk of career stagnation.

Official Responses: The Psychological Perspective

Experts in behavioral science argue that the primary barrier to AI adaptation is emotional rather than technical. Dr. Walter Matweychuk (2025), a licensed psychologist and specialist in Rational Emotive Behavior Therapy (REBT), posits that how an individual perceives the "threat" of AI shapes their professional longevity.

The REBT Framework

Rational Emotive Behavior Therapy, developed by Dr. Albert Ellis, suggests that our distress stems not from events themselves, but from the rigid beliefs we hold about those events. In the context of AI, Dr. Matweychuk identifies a clear distinction between Healthy Concern and Unhealthy Anxiety.

- Healthy Concern: Recognizes that AI is a disruptive force. This mindset leads to proactive behavior, such as taking a course on prompt engineering or finding ways to automate mundane tasks.

- Unhealthy Anxiety: Rooted in "musturbatory" thinking, Ellis’s term for rigid demands such as "This must not happen" or "I cannot stand this change." This mindset leads to avoidance, catastrophizing, and, eventually, professional obsolescence.

Addressing the Four Anxiety Traps

Clinical observation has identified four recurring "traps" that fuel AI-related distress:

- The Role Replacement Fear: The belief that "AI will steal my role, and that must not happen." REBT reframes this belief by accepting that roles will change while recognizing that the human ability to master new tools keeps its value.

- The Obsolescence Catastrophe: Viewing a potential layoff as an "awful" end-of-the-world scenario. Reframing this involves recognizing that while job loss is difficult, humans have a long history of adapting to economic shifts.

- The Survival Threat: The feeling that an AI-driven world is "too threatening" to endure. Psychological flexibility training helps individuals see change as uncomfortable but bearable.

- The Relational Concern: The fear that AI companions will make human intimacy obsolete. Experts suggest a "both/and" approach, viewing AI as a tool for connection rather than a replacement for it.

Implications: The Future of Work and Human Agency

The AI mandate points to a long-term restructuring of knowledge work. As AI takes over the "heavy lifting" of data processing, drafting, and basic creative generation, the value of human labor will likely shift toward critical discernment and strategic oversight.

The Creative Frontier

In creative fields, tools like Midjourney and Stable Diffusion are not just automating tasks; they are acting as "ideation partners." The implication is that the "artist" of the future may act more like a "director," using AI to explore thousands of conceptual iterations before applying a final human touch.

The Ethical and Practical Safeguards

As AI becomes ubiquitous, the "Responsibility Gap" (the difficulty of assigning accountability when an AI system causes harm or makes an error) becomes a primary concern. Guidelines for responsible AI use commonly emphasize:

- Verification: Because AI models can "hallucinate," producing plausible but false output, human fact-checking is more critical than ever.

- Privacy: The risk of feeding sensitive corporate or personal data into public LLMs remains a top-tier security threat.

- Ethics: The potential for AI to be used in plagiarism or the creation of deceptive content requires a new framework of digital ethics.

Conclusion: Adapting to the Permanent Wave

Many tech leaders and psychological experts agree: AI is not a passing trend but a permanent fixture of the human experience, often compared to the advent of electricity. The "AI Mandate" in the workplace is merely the first step in a broader societal integration.

For the individual, the path forward requires a "3-Step REBT Reset":

- Notice the Thought: Identify the rigid, fear-based belief about AI.

- Dispute the Belief: Question whether the catastrophic fear is realistic or helpful.

- Replace with Flexibility: Adopt a mindset that views AI as a "scalpel," a tool that can harm or heal depending on the skill of the person holding it.

As we move deeper into this AI-driven world, the most valuable skill may not be coding or prompt engineering, but psychological flexibility. The ability to remain calm, curious, and adaptive in the face of exponential change will be the defining characteristic of those who thrive in the age of automation.

A Strategic Checklist for AI Integration

To transition from anxiety to mastery, professionals are encouraged to adopt the following best practices:

- Be Specific: Treat AI interactions like delegating to a highly capable but literal-minded intern.

- Iterate: Do not accept the first output; refine prompts to find the "sweet spot" of utility.

- Stay Informed: Dedicate 15 to 30 minutes a week to exploring new features in the AI ecosystem to avoid "technological debt."

- Maintain Human Oversight: Use AI to enhance critical thinking, never to replace it. The final decision—and the ethical responsibility for it—must remain human.

References:

Bindley, K., & Blunt, K. (2024). "Tech Firms Aren’t Just Encouraging Their Workers to Use AI. They’re Enforcing It." The Wall Street Journal.

Matweychuk, W. (2025). "AI Anxiety: Coping in an Automated World." Clinical Perspectives in REBT.