In the hallowed halls of academia, a citation is more than just a footnote; it is a thread in the grand tapestry of human knowledge. By referencing preceding work, researchers establish a "family tree" of ideas, protocols, and empirical evidence, ensuring that every new discovery stands on the shoulders of those who came before. However, a new, insidious trend threatens to unravel this foundation.

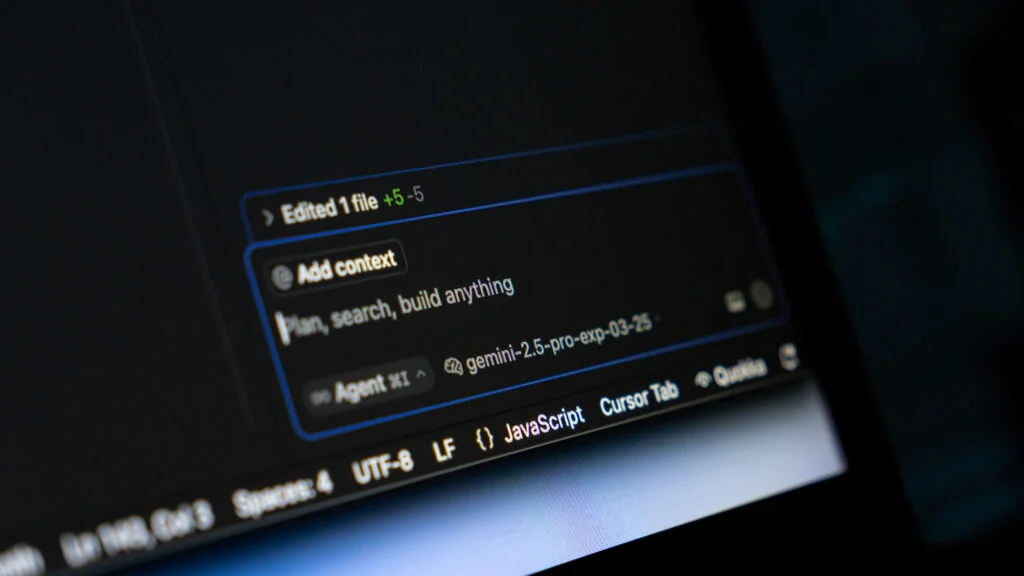

A growing number of academic papers are being published with "fabricated" citations—references to non-existent studies, journals, or authors. This phenomenon, detailed in a groundbreaking study published this Thursday in The Lancet, suggests that the rapid integration of generative artificial intelligence (AI) into the research workflow is introducing a new form of "slop" into the public record of science. As researchers grapple with this digital erosion, the scientific community is being forced to confront a difficult question: Is AI accelerating progress, or is it merely accelerating the production of misinformation?

The Genesis of the Problem: A Symptom of "Efficiency"

The study, led by Maxim Topaz, a nurse and health AI researcher at Columbia University, was born from a moment of personal professional vulnerability. While drafting an editorial for a medical journal, Topaz used an AI chatbot to assist with editing. Although he rigorously checked his references by hand, an editor eventually flagged a citation that simply did not exist.

"I was deeply embarrassed: I checked for that, and it still almost happened to me," Topaz admitted. This realization sparked a wider investigation. Analyzing over 2 million papers and 97 million citations, Topaz and his team identified approximately 4,000 fabricated citations embedded within 2,800 papers. While the raw number might seem small in the context of global scientific output, the trajectory is deeply alarming.

The Chronology of an Epidemic

The rise of hallucinated citations tracks almost perfectly with the democratization of large language models (LLMs) like ChatGPT.

- 2023: The phenomenon was rare, with approximately 1 in 2,828 papers containing at least one fabricated reference.

- 2025: The frequency surged to 1 in 458 papers—a sixfold increase in just two years.

- 2026 (Early data): In the first seven weeks of 2026, the rate escalated further, reaching 1 in 277 papers.
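
The arithmetic behind these figures can be checked with a short sketch. It uses only the three "1 in N" rates quoted above; the function and variable names are illustrative and do not come from the study.

```python
# Rates quoted in the article: "1 in N papers" with at least one fabricated reference.
one_in_n = {"2023": 2828, "2025": 458, "2026 (first seven weeks)": 277}


def fold_increase(baseline_n: int, later_n: int) -> float:
    """Return how many times more frequent the later rate is than the baseline."""
    # Each rate is 1/N, so (1/later_n) / (1/baseline_n) simplifies to baseline_n / later_n.
    return baseline_n / later_n


def percent(n: int) -> float:
    """Convert a "1 in N" rate to a percentage of papers."""
    return 100.0 / n


baseline = one_in_n["2023"]
for label, n in one_in_n.items():
    print(f"{label}: {percent(n):.3f}% of papers, "
          f"{fold_increase(baseline, n):.1f}x the 2023 rate")
```

On these numbers, the 2025 rate is about 6.2 times the 2023 rate, consistent with the "sixfold" description, and the early 2026 rate is about 10.2 times the 2023 rate.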

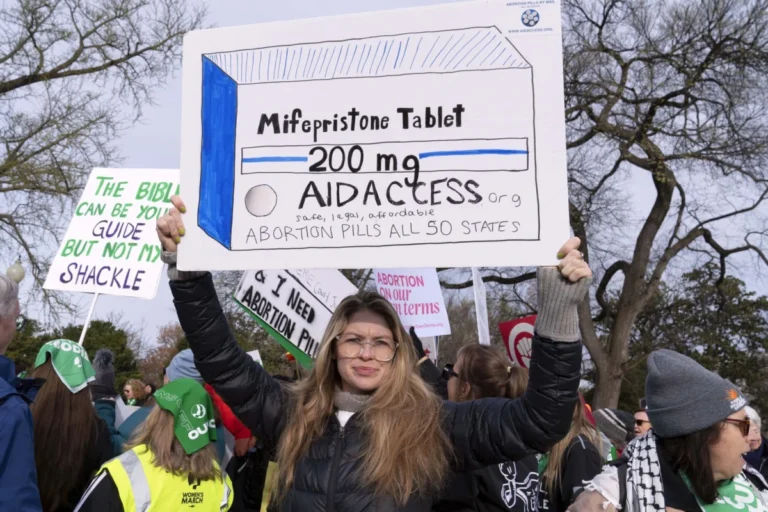

This upward trend coincides with a series of high-profile incidents. Last year, the widely publicized "Make Our Children Healthy Again" report from the MAHA (Make America Healthy Again) Commission, which addressed chronic disease priorities, contained several erroneous citations that experts believe were generated by AI. Similarly, major publishers like Springer Nature have been forced to flag papers containing references to non-existent articles, turning what was once an anomaly into a common headache for editors and peer reviewers.

Supporting Data: Mapping the "Ghost" Literature

The data provided by Topaz’s research highlights that the issue is not distributed evenly across the academic landscape. By utilizing a newly developed dashboard, the research team found that more than a third of all fabricated citations originate from just two large, open-access publishers.

While Topaz declined to publicly name the specific publishers to avoid legal complications, he noted a distinct correlation: these organizations operate on a business model that relies heavily on charging authors high publication fees rather than charging readers through traditional paywalls. This "pay-to-publish" incentive structure, critics argue, prioritizes volume over rigor.

The problem, however, is not limited to these publishers. The Public Library of Science (PLOS) has acknowledged seeing "numerous" unverifiable references in submissions. Renee Hoch, head of publication ethics at PLOS, noted that incorporating automated detection tools into workflows is challenging. "In piloting options thus far, we have seen a lot of false positives," Hoch explained, citing issues with formatting, multilingual sources, and incomplete data in common literature databases.

The Cultural Shift: From Reflection to Prompting

The technical failure of AI models to "truth-check" their own output is only half the problem. The deeper issue, according to experts, is a cultural shift in how scientists engage with literature.

Mohammad Hosseini, a professor at Northwestern University who specializes in research integrity, argues that the proliferation of hallucinated citations is a symptom of a flawed scholarly evaluation model. "It shows that there are people who don’t even want to spend half an hour to check the references of a paper," Hosseini stated. "It shows how fast they want to get published and how desperate they are to get published."

The Death of the Reflective Process

Historically, the act of citing was a reflective, deliberate process. A researcher would read a study, analyze its relevance, and determine how it contributed to the current work. Today, the process is increasingly transactional. A researcher may simply prompt an AI tool to "provide citations regarding X," and the AI, prone to hallucination, provides a list of seemingly authoritative but entirely fictional sources.

"People simply use their hunches to prompt ChatGPT or other AI tools, and then they have a bunch of citations that they can sprinkle over their papers," Hosseini warned. "That is not a healthy practice. The engagement with the literature is becoming increasingly more superficial, and that is neither good for the researcher, nor for society, nor for our publication practices."

Official Responses and Defensive Measures

Major players in the publishing industry are aware of the threat, though their responses vary in intensity and efficacy.

- The Science Family of Journals: A spokesperson for the Science journals confirmed they utilize an automated tool to check references against known databases. Currently, they report that they have not encountered a published paper containing a fabricated citation, suggesting that their existing guardrails are effective.

- NEJM and JAMA: Similarly, the New England Journal of Medicine and JAMA have implemented validation tools. Both organizations emphasized that, ultimately, the burden of truth rests on the author. By signing the submission forms, authors attest to the accuracy of their work, meaning that AI-generated hallucinations are not just an academic error—they are a breach of professional ethics.
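
As a rough illustration of what checking references against known databases can involve, the sketch below compares cited titles with a small local index. It is not the tool used by Science, NEJM, or JAMA, whose internals the article does not describe. The index, the sample titles, the function names, and the 0.9 threshold are all hypothetical, and a real system would query bibliographic databases rather than a hard-coded list. The normalization step is aimed at the formatting and multilingual false positives that PLOS reported.

```python
import re
import unicodedata
from difflib import SequenceMatcher
from typing import List, Optional, Tuple


def normalize(title: str) -> str:
    """Lowercase, strip accents and punctuation, and collapse whitespace."""
    text = unicodedata.normalize("NFKD", title)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9 ]+", " ", text.lower())
    return " ".join(text.split())


def best_match(title: str, known_titles: List[str]) -> Tuple[Optional[str], float]:
    """Return the closest known title and its similarity score in [0, 1]."""
    target = normalize(title)
    best, best_score = None, 0.0
    for candidate in known_titles:
        score = SequenceMatcher(None, target, normalize(candidate)).ratio()
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score


def flag_unverified(references: List[str], known_titles: List[str],
                    threshold: float = 0.9) -> List[str]:
    """List cited titles with no sufficiently close match in the index."""
    return [ref for ref in references
            if best_match(ref, known_titles)[1] < threshold]


# Hypothetical toy index and reference list, invented for illustration only.
KNOWN = [
    "Nurse staffing and patient outcomes: a cohort study",
    "Étude des soins infirmiers à domicile",
]
CITED = [
    "Nurse Staffing and Patient Outcomes - A Cohort Study.",  # formatting differs
    "Etude des soins infirmiers a domicile",                  # accents dropped
    "Deep learning predicts discharge readiness in home care",  # not in index
]

print(flag_unverified(CITED, KNOWN))
```

With this toy data, only the third title is flagged, because the first two match the index once punctuation, case, and accents are normalized. A flag here means "not found in the index", not "fabricated": an incomplete index still produces false positives, which is why a human check remains the final step.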

Despite these measures, the sheer volume of submissions makes total prevention difficult. Many editors are currently overwhelmed, and as the Lancet study suggests, the "slop" is slipping past even rigorous editorial processes.

Implications: A Threat to the Scientific Foundation

The consequences of this trend are far-reaching. Systematic reviews and clinical guidelines—which serve as the bedrock for public health policy and medical treatment—rely on the integrity of the citation chain. If these reviews incorporate studies built upon "ghost" foundations, the reliability of the entire medical consensus begins to fracture.

Furthermore, the "polluting" of the public record creates a long-term problem for researchers. Future AI models, which are trained on existing scientific literature, will inevitably "learn" from these fabricated citations. This creates a feedback loop: AI generates a fake citation, the fake citation is published, and the next generation of AI uses that publication as a factual source.

Misha Teplitskiy, a sociologist of science at the University of Michigan, summarizes the danger: "One question that all of us have is: ‘Is AI making science more efficient, helping us do better work, or even the same work, but faster, or is it just creating slop?’ This is one of the first papers that is telling us something about the quality of what is being produced with LLMs, and it is a signal of slop."

As the scientific community stands at this crossroads, the message from researchers like Topaz and Hosseini is clear: Efficiency cannot come at the expense of veracity. The future of scientific inquiry depends on the ability to distinguish between genuine discovery and the glitzy, synthetic output of an unthinking machine. Without a return to rigorous, human-led verification, the "family tree" of science risks being choked by weeds of its own making.